In late February 2026, global chip giant NVIDIA announced during its earnings conference call that it had begun delivering the first samples of its next-generation AI data center platform, Vera Rubin, to select customers. The announcement marks the official entry of this highly anticipated AI supercomputing platform into the deployment phase and paves the way for subsequent mass production and rollout.

Technical Specifications: Six Chips Forming a Complete AI Factory

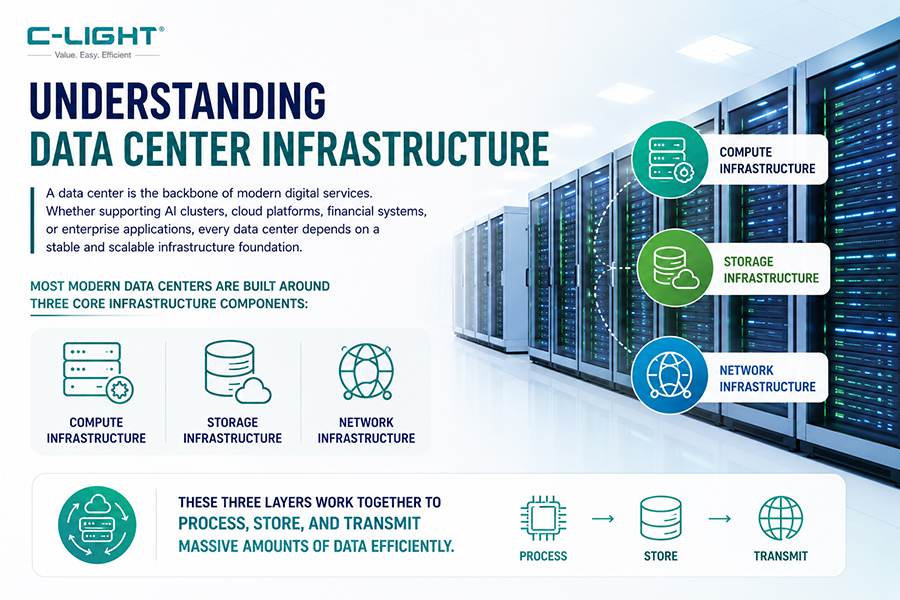

The Vera Rubin platform is not merely a single-chip upgrade but a complete AI system architecture built from six highly specialized chips designed to work together. It marks the formal shift of AI computing from individual GPUs to the era of rack-scale systems engineering.

Core components of the platform include:

Vera CPU: Features 88 custom NVIDIA-designed Armv9.2 "Olympus" cores, optimized for next-generation AI factories.

Rubin GPU: Each GPU integrates 224 Streaming Multiprocessors and is equipped with 288GB of HBM4 high-bandwidth memory. A single Rubin GPU can achieve approximately 50 PetaFLOPS of FP4 compute performance.

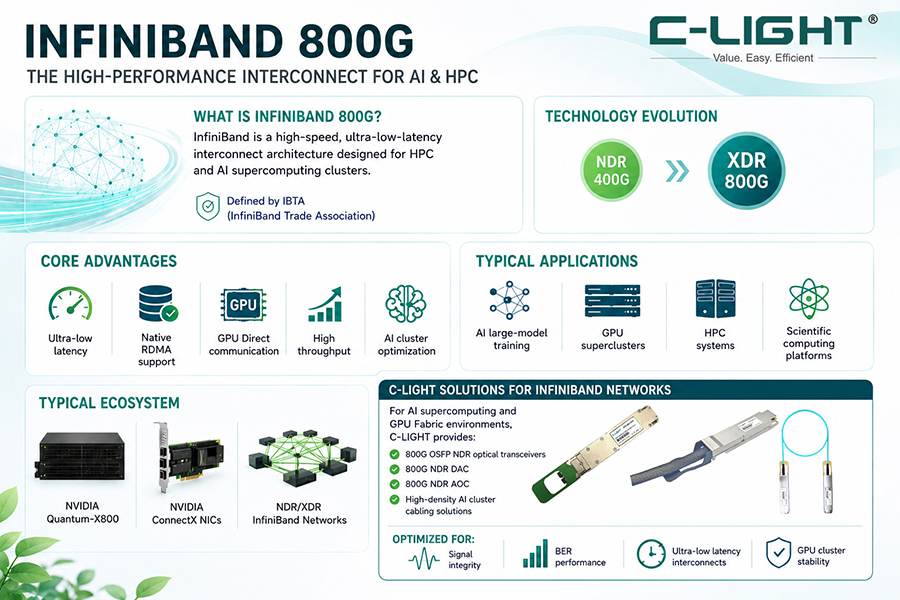

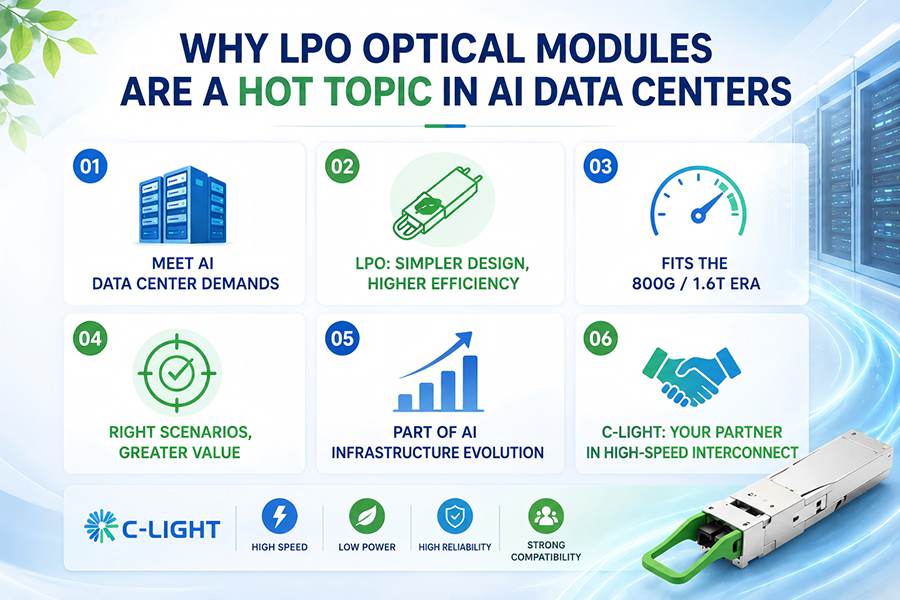

Interconnect and Networking Chips: These include the NVLink 6.0 switch ASIC (providing 3.6TB/s of bandwidth) for rack-scale expansion, the BlueField-4 Data Processing Unit (DPU), the Spectrum-6 Photonic Ethernet switch, and the 1.6Tb/s Quantum-CX9 Photonics InfiniBand network interface card (NIC).

At the system level, the complete Vera Rubin NVL72 rack integrates 72 Rubin GPUs and 36 Vera CPUs. It comprises a total of 1.3 million components and weighs nearly two tons. While showcasing a complete Vera Rubin rack at the company's California headquarters, Dion Harris, head of NVIDIA's AI infrastructure, said that the new system is NVIDIA's first platform designed with full liquid cooling and that its performance per watt can be up to 10 times that of the previous-generation Grace Blackwell platform.
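The rack-level totals implied by these per-chip figures can be worked out with simple multiplication. The sketch below is illustrative only: the constants are the numbers quoted in this article, the function name `rack_totals` is hypothetical, and the results are theoretical peaks derived by arithmetic, not figures published by NVIDIA.

```python
# Per-chip and per-rack figures as quoted in the article.
GPUS_PER_RACK = 72
CPUS_PER_RACK = 36
HBM4_PER_GPU_GB = 288
FP4_PER_GPU_PFLOPS = 50   # stated as "approximately", so totals are approximate
CORES_PER_CPU = 88


def rack_totals():
    """Derive aggregate NVL72 rack figures by simple multiplication.

    Assumption: every GPU and CPU in the rack matches the quoted per-chip
    specification, and peak FP4 throughput adds up linearly across GPUs.
    """
    return {
        "hbm4_gb": GPUS_PER_RACK * HBM4_PER_GPU_GB,
        "fp4_pflops": GPUS_PER_RACK * FP4_PER_GPU_PFLOPS,
        "cpu_cores": CPUS_PER_RACK * CORES_PER_CPU,
        "gpus_per_cpu": GPUS_PER_RACK / CPUS_PER_RACK,
    }


totals = rack_totals()
print(f"HBM4 per rack:      {totals['hbm4_gb'] / 1000:.3f} TB")
print(f"Peak FP4 per rack:  {totals['fp4_pflops'] / 1000:.1f} EFLOPS")
print(f"Vera cores per rack: {totals['cpu_cores']}")
print(f"GPUs per CPU:        {totals['gpus_per_cpu']:.0f}")
```

Under these assumptions a single rack carries about 20.7 TB of HBM4 and roughly 3.6 ExaFLOPS of peak FP4 compute, with two Rubin GPUs paired to each Vera CPU.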

Performance Advantages: Significant Reduction in Inference Costs

In terms of performance, the Vera Rubin platform represents a substantial leap over its predecessor. Inference cost on Vera Rubin can fall to roughly one-tenth of that on Blackwell, and both training and inference efficiency improve. Sources indicate that the Rubin NVL72 system could deliver five times the inference performance of Blackwell.

This improvement stems from several technological advances. The Rubin GPU incorporates the third-generation Transformer Engine, designed specifically to make large language models and agentic AI faster and more efficient. For the first time, the platform also uses TSMC's 3nm process technology and introduces a chiplet design and a reticle-size design. Combined with HBM4 memory, these changes deliver significant power-efficiency gains.

NVIDIA states that in specific AI training scenarios, the Rubin platform may need only a quarter as many GPUs as Blackwell to complete the same task. This suggests that large AI model builders can keep expanding their computing power while managing costs.
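To make the "quarter of the GPUs" claim concrete, here is a rough sizing sketch. The function names `rubin_gpus_needed` and `racks_needed` are hypothetical, and the 0.25 ratio applies only to the specific training scenarios NVIDIA refers to. Real workloads may scale differently.

```python
import math

GPUS_PER_NVL72_RACK = 72  # from the article's rack description


def rubin_gpus_needed(blackwell_gpus: int, ratio: float = 0.25) -> int:
    """Estimate Rubin GPUs for a job sized in Blackwell GPUs.

    Assumption: the article's "a quarter of the GPUs" figure holds for the
    workload in question. Rounds up, since a GPU cannot be partly allocated.
    """
    if blackwell_gpus < 0:
        raise ValueError("GPU count must be non-negative")
    return math.ceil(blackwell_gpus * ratio)


def racks_needed(gpus: int) -> int:
    """Number of full NVL72 racks required to host the given GPU count."""
    return math.ceil(gpus / GPUS_PER_NVL72_RACK)


blackwell_job = 1000  # illustrative job size, not a figure from the article
rubin_job = rubin_gpus_needed(blackwell_job)
print(rubin_job, "Rubin GPUs in", racks_needed(rubin_job), "NVL72 racks")
```

For an illustrative job that would occupy 1,000 Blackwell GPUs, the estimate comes to 250 Rubin GPUs, which fit in four NVL72 racks.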

Industry Chain Layout: Global Supply Chain Synergy

To support the launch of the Vera Rubin platform, NVIDIA's partners are actively advancing software and hardware adaptation and upgrades. Prominent AI server hardware manufacturers have already received physical chip samples and have begun system development.

Notably, NVIDIA has adopted a highly integrated delivery strategy. Market rumors suggest the company plans to deliver pre-assembled L10 VR200 compute trays, complete with Vera CPUs, Rubin GPUs, cooling systems, and interfaces, directly to partners. Different partners will receive different components of the platform, and some manufacturers will receive fully integrated NVL72 VR200 racks.

From a supply chain perspective, Vera Rubin is a truly global project. The core chips are primarily produced by TSMC, while the complete system components come from at least 80 suppliers across 20 countries. A single rack alone requires 5,000 copper cables to connect the entire system, totaling approximately two miles in length.
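As a quick sanity check on the cabling figures, the average cable length follows from dividing the total length by the cable count. This is a back-of-the-envelope sketch that treats "approximately two miles" as exactly two miles. The variable names are illustrative.

```python
METERS_PER_MILE = 1609.344  # exact definition of the international mile

CABLES_PER_RACK = 5000      # from the article
TOTAL_LENGTH_MILES = 2      # "approximately two miles" in the article

total_length_m = TOTAL_LENGTH_MILES * METERS_PER_MILE
avg_cable_length_m = total_length_m / CABLES_PER_RACK

print(f"Total copper length: {total_length_m:.0f} m")
print(f"Average cable length: {avg_cable_length_m * 100:.0f} cm")
```

The result, roughly 64 cm per cable on average, is consistent with short copper links running within a single rack.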

In the advanced packaging stage, TSMC's CoWoS-L packaging technology is key to enhancing computing efficiency. As demand continues to grow, TSMC is expanding its outsourcing of packaging and testing, and companies such as ASE Group and KYEC could benefit from order spillovers.

Customer Ecosystem: Cloud Giants Prepare Early

Customer feedback indicates that major cloud service providers have already shown strong interest in the Vera Rubin platform, and all of them are expected to deploy it.

Meta has publicly stated its plans to adopt the Rubin architecture in future data center construction. Additionally, tech giants such as OpenAI, Anthropic, Amazon, Google, and Microsoft are considered potential customers for the platform. On February 27th, OpenAI announced it had secured $110 billion in funding from investors including NVIDIA, Amazon, and SoftBank. The funds are intended to expand its AI infrastructure.

During the earnings call, NVIDIA CEO Jensen Huang emphasized that the five major cloud service providers and hyperscale customers collectively contribute slightly more than 50% of the company's data center revenue. Sovereign AI business grew more than threefold year-over-year in fiscal year 2026, exceeding $30 billion, primarily driven by customers in countries like Canada, France, and Singapore.

Competitive Landscape: Multi-Vendor Strategy Becomes a Trend

While solidifying its technological leadership, NVIDIA also faces an increasingly complex competitive environment.

Large cloud customers are seeking to reduce their dependence on a single chip supplier. Earlier, Meta announced an expanded strategic partnership with AMD, under which it plans to deploy 6 gigawatts of data center equipment based on AMD processors over the next five years and to purchase up to 10% of AMD's shares. Anthropic had previously announced plans to deploy up to one million of Google's custom TPU chips, in a deal valued at tens of billions of dollars.

AMD is planning to launch a product named Helios to compete with Vera Rubin and has already secured a large order from Meta. Amazon and Google are also aggressively promoting their custom AI chips, with more and more customers beginning to adopt a multi-vendor strategy.

Future Outlook: Physical AI as the Next Growth Curve

Amidst short-term fluctuations and long-term competition, NVIDIA is cultivating new growth points by investing in next-generation technologies.

In the conference call, Huang reiterated that global data center capital expenditure is expected to reach between $3 trillion and $4 trillion by 2030, driven primarily by the explosive demand for agentic AI and physical AI. He revealed that physical AI contributed over $6 billion in revenue to NVIDIA this fiscal year.

In the robotics sector, the Jetson Thor platform, based on the Blackwell architecture, can deliver AI compute performance of up to 2,070 FP4 TFLOPS. Huang believes the "ChatGPT moment" for robotics has arrived, and he noted that autonomous ride-hailing mileage is growing exponentially. Commercial fleets from companies such as Waymo, Tesla, and Uber are expected to expand from thousands to millions of vehicles within the next decade.

Looking further ahead, NVIDIA is already planning iterations beyond Vera Rubin. A prototype of its next-generation large-scale rack, Kyber, has been unveiled. The new rack will increase the number of GPUs from 72 to 288 and is expected to launch next year. Huang hinted at CES that more undisclosed chips might be revealed at the GTC 2026 conference.

With the delivery of the first Vera Rubin samples, NVIDIA has once again demonstrated to the market its iteration speed and system integration capabilities in AI computing infrastructure. From a single GPU to a complete AI factory built on six collaborating chips, from air cooling to full liquid cooling, and from training to inference to physical AI, the chip giant is attempting to build a formidable, hard-to-replicate system-level moat.

However, capital market skepticism, customers' multi-vendor strategies, and geopolitical uncertainties all remind market participants that even on the golden track of AI computing, a smooth path is not guaranteed. The mass production and deployment of Vera Rubin will be a crucial observation window to test whether the "compute is revenue" logic can consistently deliver on its promise.

TEL:+86 158 1857 3751
