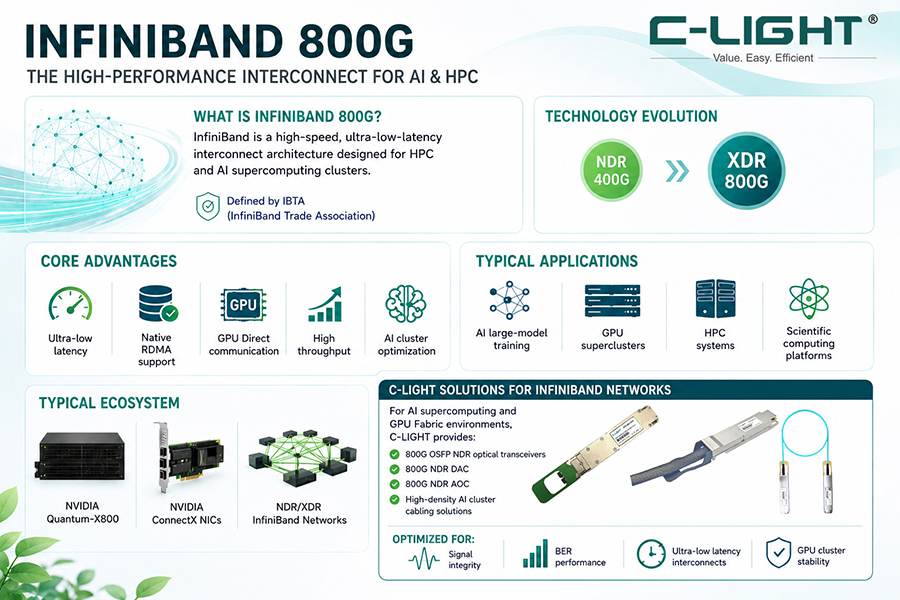

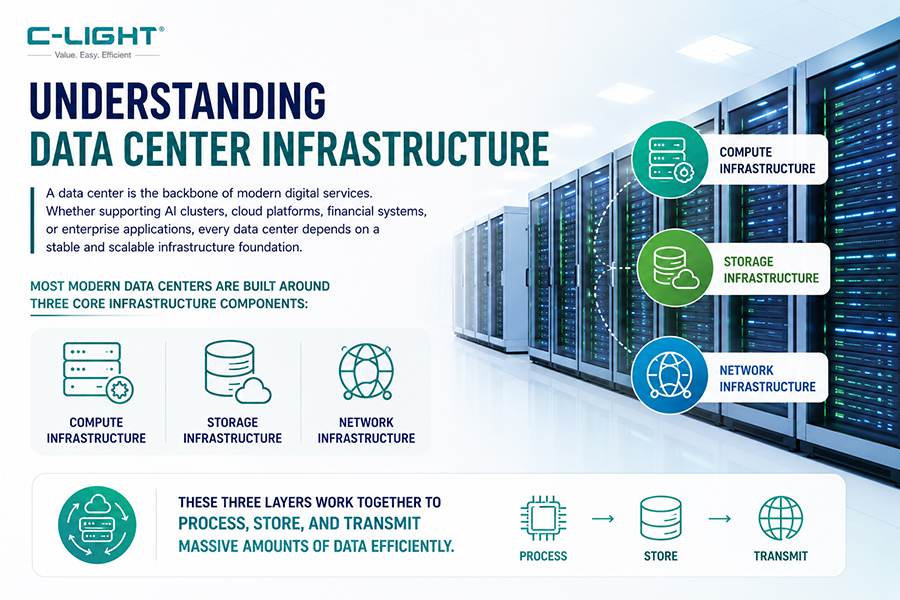

Driven by the explosive growth of AI computing, modern data centers are evolving from being “storage-centric” to becoming “compute- and interconnect-centric.” Whether supporting large-scale AI training clusters or inference platforms, high-speed and low-latency connectivity has become one of the most critical factors determining overall system performance.

Among various interconnect technologies, DAC (Direct Attach Copper) and AOC (Active Optical Cable) have become indispensable infrastructure components for AI data centers.

1. The Real Bottleneck in AI Data Centers: Not Just Computing Power

Traditionally, GPU and CPU performance were considered the primary drivers of AI capability. In modern AI clusters, however, a more practical challenge has emerged: data transmission speed often cannot keep pace with the growth in computing power.

In large-scale AI model training, where thousands of GPUs operate in parallel, collective operations such as All-Reduce continuously synchronize gradients across GPUs, typically at every training step. If network latency is high or bandwidth is insufficient, GPUs sit idle waiting for data, which directly results in:

Lower GPU utilization

Significantly longer training times

Increased power consumption and operational costs
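A back-of-the-envelope sketch makes the bandwidth effect concrete. It uses the common ring All-Reduce cost model, in which each GPU sends roughly 2(N-1)/N times the gradient size over its link, and it ignores latency, overlap with computation, and protocol overhead. The function name, model size, GPU count, and link speeds are hypothetical illustration values, not figures from any specific cluster.

```python
def ring_allreduce_seconds(grad_bytes: float, num_gpus: int, link_gbps: float) -> float:
    """Bandwidth-only estimate of one ring All-Reduce, in seconds.

    In a ring All-Reduce (reduce-scatter followed by all-gather), each GPU
    transmits 2 * (N - 1) / N * grad_bytes over its link. Latency, overlap
    with computation, and protocol overhead are deliberately ignored.
    """
    if num_gpus < 2:
        return 0.0  # nothing to synchronize
    bytes_sent = 2 * (num_gpus - 1) / num_gpus * grad_bytes
    link_bytes_per_s = link_gbps * 1e9 / 8  # Gb/s -> bytes/s
    return bytes_sent / link_bytes_per_s


# Hypothetical example: 7 billion FP16 gradients (2 bytes each) on 1,024 GPUs.
GRAD_BYTES = 7e9 * 2
for gbps in (100, 400, 800):
    seconds = ring_allreduce_seconds(GRAD_BYTES, 1024, gbps)
    print(f"{gbps}G link: ~{seconds:.2f} s per All-Reduce")
# 100G link: ~2.24 s per All-Reduce
# 400G link: ~0.56 s per All-Reduce
# 800G link: ~0.28 s per All-Reduce
```

Real training overlaps much of this communication with computation, so the numbers are an upper-bound style estimate, but they show how link speed feeds directly into per-step synchronization time.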

This is why the idea that “the network is the computer” has become increasingly relevant in the AI era.

2. DAC and AOC: The Two Core Interconnect Technologies for AI Infrastructure

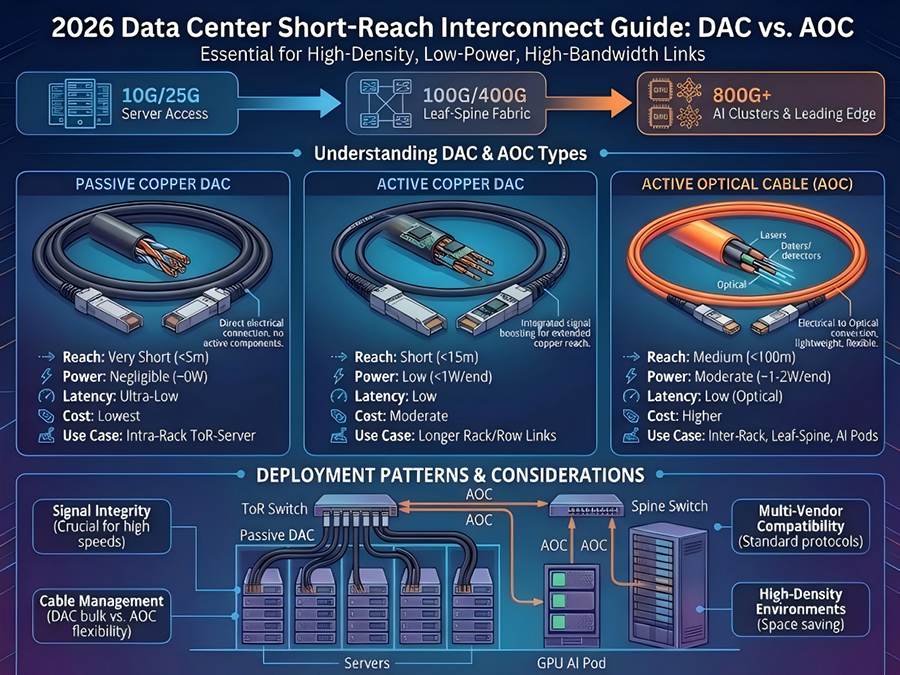

2.1 DAC (Direct Attach Copper)

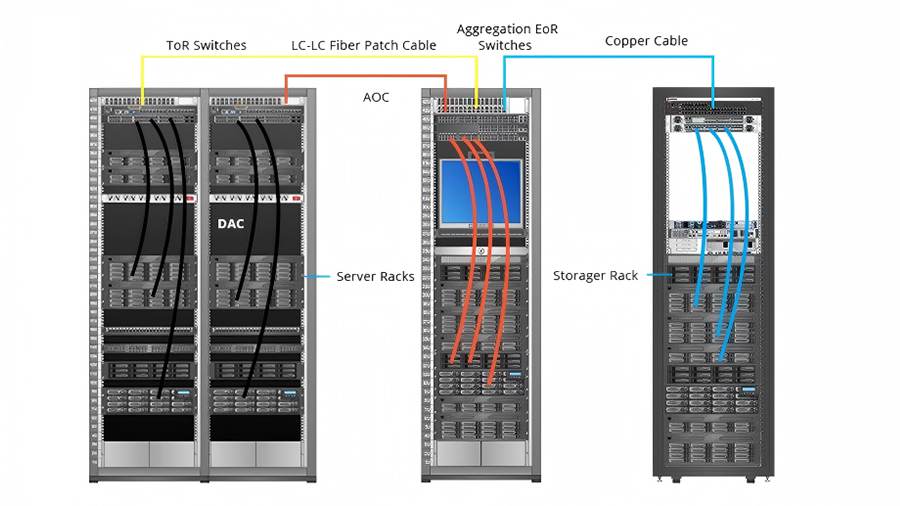

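DAC is a fixed-length twinaxial copper cable with transceiver-style connectors (such as SFP or QSFP) permanently attached at each end. It is a low-cost, low-power, short-reach interconnect commonly used inside racks or between adjacent racks.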

Key Advantages of DAC

Ultra-low latency with minimal active components

Extremely low power consumption without optical conversion

Cost-effective deployment

High reliability and stability

Typical DAC Applications

ToR switch to server connections

Internal GPU server interconnects

Short-range high-speed links (≤3–5 meters)

10G / 25G / 100G / 400G connectivity

In AI clusters, DAC is the foundation of high-density, cost-efficient in-rack networking.

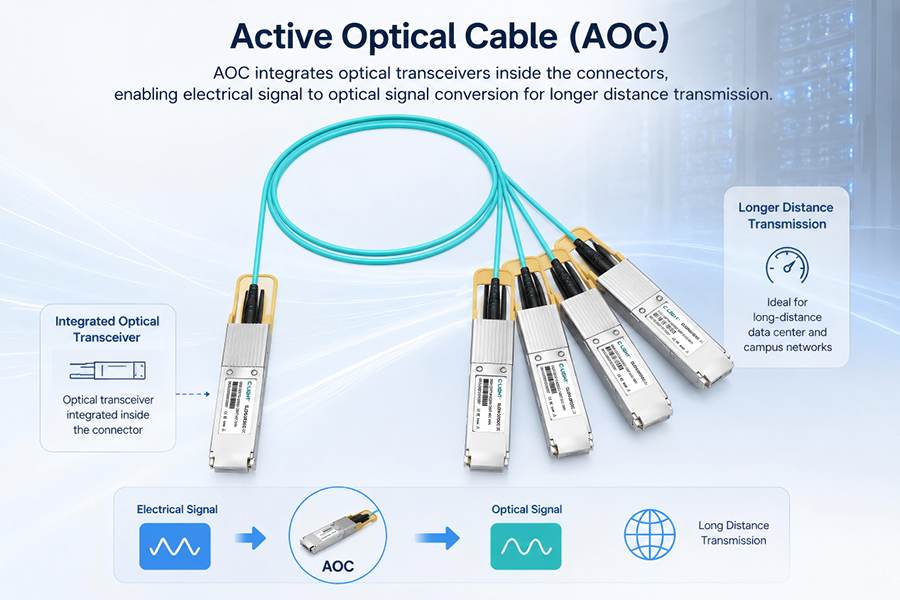

2.2 AOC (Active Optical Cable)

AOC integrates optical transceivers within the cable assembly, enabling electrical-to-optical signal conversion for longer-distance transmission.

Key Advantages of AOC

Longer transmission distance (up to 100 meters or more)

Strong resistance to electromagnetic interference (EMI)

Better suited than copper to 400G/800G and beyond, where copper reach shrinks

Thinner, lighter cables that ease routing in dense or complex cabling environments

Typical AOC Applications

Inter-rack connectivity in Leaf-Spine architectures

Cross-cabinet GPU cluster communication

High-speed switch-to-switch interconnects

AOC is the critical bridge for high-bandwidth AI links that exceed the reach of copper.

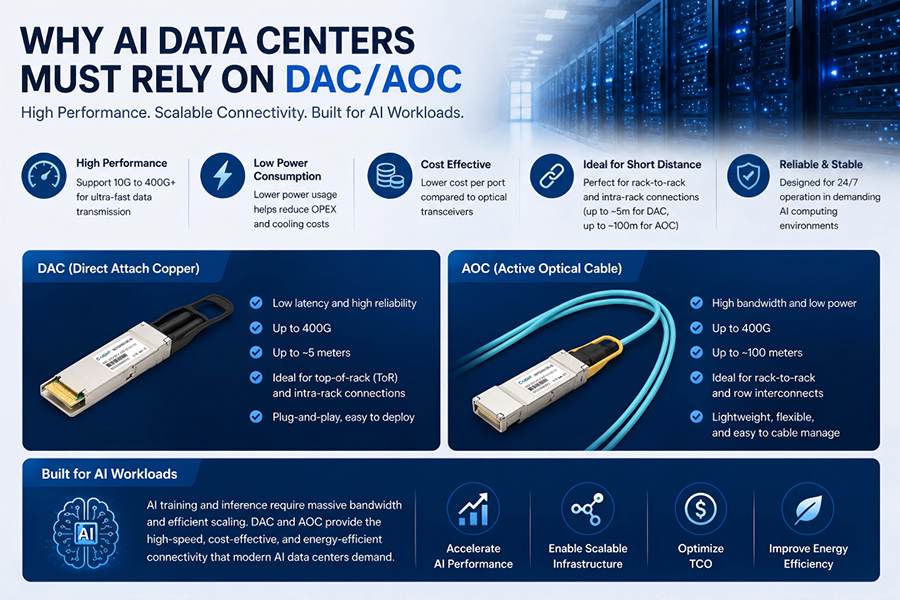

3. Why AI Data Centers Must Rely on DAC and AOC

3.1 Supporting Extreme Bandwidth Demands (100G → 400G → 800G)

AI workloads are driving exponential growth in network bandwidth requirements.

Examples include:

GPT-scale model training requiring Tbps-level throughput

GPU-to-GPU communication demanding non-blocking networks

DAC and AOC support both current and next-generation transmission rates, including 400G and 800G, making them practical solutions for AI networking infrastructure.

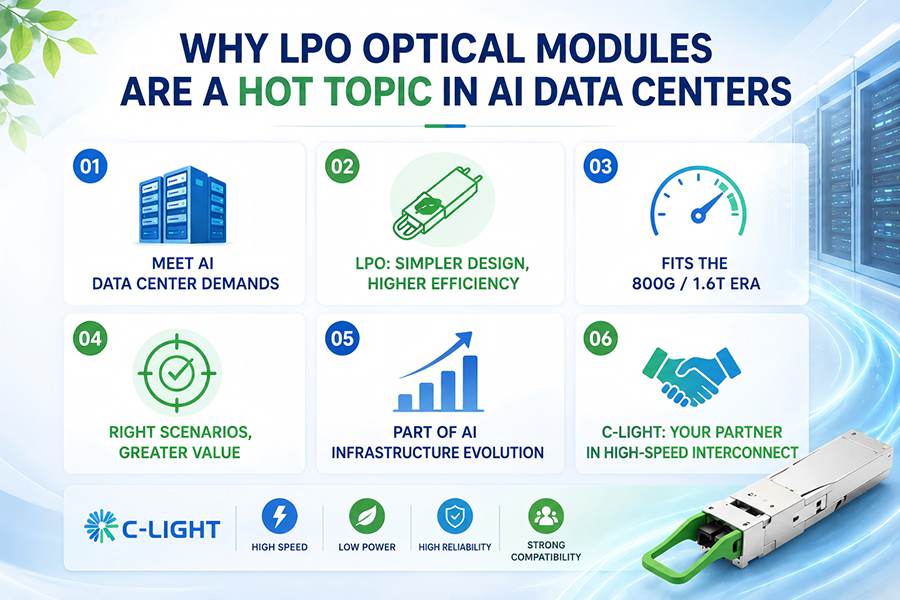

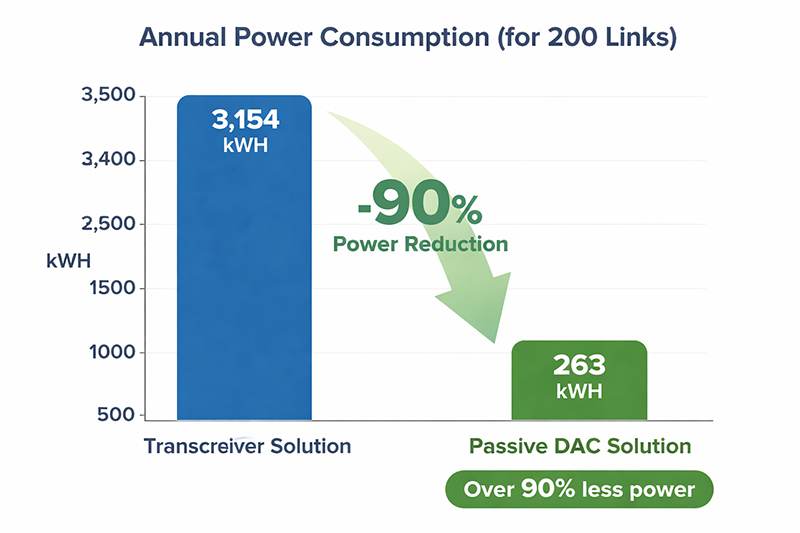

3.2 Reducing Power Consumption and Thermal Pressure

AI data centers are already extremely power-intensive. Excessive interconnect power consumption can significantly increase cooling and operational challenges.

Power Efficiency Benefits

Passive DAC adds almost no power overhead

AOC is more power-efficient than traditional optical transceiver + fiber combinations

Across thousands of links at hyperscale, these per-link savings add up and directly affect TCO (Total Cost of Ownership).
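A rough way to see the TCO effect is to multiply a per-link power figure by link count, hours per year, and electricity price, scaled by PUE to approximate cooling overhead. This is a minimal sketch: the function name and every input (link count, per-link wattage, PUE, price) are hypothetical placeholders, not measured values for any DAC or AOC product.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(links: int, watts_per_link: float,
                       pue: float, usd_per_kwh: float) -> float:
    """Yearly electricity cost (USD) of running `links` cables around the clock.

    PUE (power usage effectiveness) scales IT power up to total facility
    power, roughly accounting for cooling and power-distribution overhead.
    """
    kwh_per_year = links * watts_per_link * HOURS_PER_YEAR / 1000
    return kwh_per_year * pue * usd_per_kwh


# Placeholder inputs only: real per-link wattage comes from product datasheets.
LINKS, PUE, PRICE = 10_000, 1.3, 0.10
low = annual_energy_cost(LINKS, 1.0, PUE, PRICE)    # hypothetical low-power link
high = annual_energy_cost(LINKS, 10.0, PUE, PRICE)  # hypothetical higher-power link
print(f"Annual difference: ${high - low:,.0f}")  # Annual difference: $102,492
```

Because the cost scales linearly with link count, even a few watts per link becomes a recurring line item once a cluster reaches tens of thousands of ports.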

3.3 Simplifying Large-Scale Deployment

Compared with traditional “optical module + patch cord” solutions:

DAC and AOC are integrated plug-and-play solutions

No separate transceivers and patch cords to match, and no exposed fiber connectors to clean

Fewer failure points improve operational reliability

For AI clusters with thousands of network ports, simplified deployment and maintenance are essential.

3.4 Enabling High-Density GPU Cluster Architectures

Modern AI data centers commonly adopt:

Spine-Leaf architectures

GPU POD architectures

Massive parallel computing clusters

These environments are characterized by explosive growth in connection density.

Combined DAC + AOC Architecture Benefits

DAC handles short-range high-density connections

AOC supports inter-rack scalability

Together, they provide:

Optimized cost efficiency

Maximum network performance

Flexible infrastructure scalability
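The division of labor above can be sketched as a cable count for a simple two-tier leaf-spine fabric, assuming (as the list describes) DAC for server-to-leaf links inside each rack and AOC for leaf-to-spine links between racks. The function name, the one-leaf-per-rack layout, and the example cluster sizes are hypothetical; real designs also account for switch port counts, breakout cables, and spine sizing.

```python
import math
from dataclasses import dataclass


@dataclass
class CablePlan:
    leaves: int     # one leaf (ToR) switch per rack
    dac_links: int  # server-to-leaf links, inside each rack
    aoc_links: int  # leaf-to-spine links, between racks


def plan_two_tier(servers: int, servers_per_rack: int, links_per_server: int,
                  server_link_gbps: int, uplink_gbps: int,
                  oversubscription: float = 1.0) -> CablePlan:
    """Count cables in a two-tier leaf-spine fabric.

    oversubscription is the ratio of downlink to uplink bandwidth on each
    leaf; 1.0 means non-blocking.
    """
    leaves = math.ceil(servers / servers_per_rack)
    dac_links = servers * links_per_server
    aoc_links = 0
    remaining = servers
    for _ in range(leaves):
        in_rack = min(servers_per_rack, remaining)  # last rack may be partial
        remaining -= in_rack
        down_gbps = in_rack * links_per_server * server_link_gbps
        # Enough uplinks to carry down_gbps / oversubscription.
        aoc_links += math.ceil(down_gbps / (oversubscription * uplink_gbps))
    return CablePlan(leaves, dac_links, aoc_links)


# Hypothetical cluster: 128 GPU servers, 4 per rack, 8 x 400G links each,
# non-blocking 800G uplinks.
print(plan_two_tier(128, 4, 8, 400, 800))
# CablePlan(leaves=32, dac_links=1024, aoc_links=512)
```

With the same inputs but 400G uplinks, the AOC count would double to 1,024, which illustrates why higher per-port rates matter for connection density.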

3.5 Achieving the Best Balance Between Cost and Performance

| Solution | Cost | Power Consumption | Distance | Bandwidth | Typical Application |
|---|---|---|---|---|---|
| DAC | Low | Extremely Low | Short (≤3–5 m) | High | In-rack connections |
| AOC | Medium | Low | Medium (up to ~100 m) | Very High | Inter-rack networking |
| Optical Transceiver + Fiber | High | Higher | Long | Extremely High | Backbone networks |
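The table's distance column effectively defines a selection rule: use the cheapest option whose reach covers the link. A minimal sketch, assuming default cut-offs of 3 m for DAC (the article quotes ≤3–5 m) and 100 m for AOC; the function and enum names are hypothetical, and real reach limits depend on the data rate and the specific product.

```python
from enum import Enum


class Interconnect(Enum):
    DAC = "DAC"
    AOC = "AOC"
    OPTICS = "Optical transceiver + fiber"


def choose_interconnect(distance_m: float, dac_max_m: float = 3.0,
                        aoc_max_m: float = 100.0) -> Interconnect:
    """Pick the lowest-cost option from the comparison table that covers distance_m.

    The default reach limits are rough assumptions based on the ranges quoted
    in this article; check the datasheet for the actual data rate and product.
    """
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    if distance_m <= dac_max_m:
        return Interconnect.DAC      # in-rack: lowest cost and power
    if distance_m <= aoc_max_m:
        return Interconnect.AOC      # inter-rack links
    return Interconnect.OPTICS       # longer runs, e.g. backbone links


for d in (2, 30, 300):
    print(d, "m ->", choose_interconnect(d).value)
```

Keeping the thresholds as parameters lets the same rule reflect shorter copper reach at higher speeds without changing the logic.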

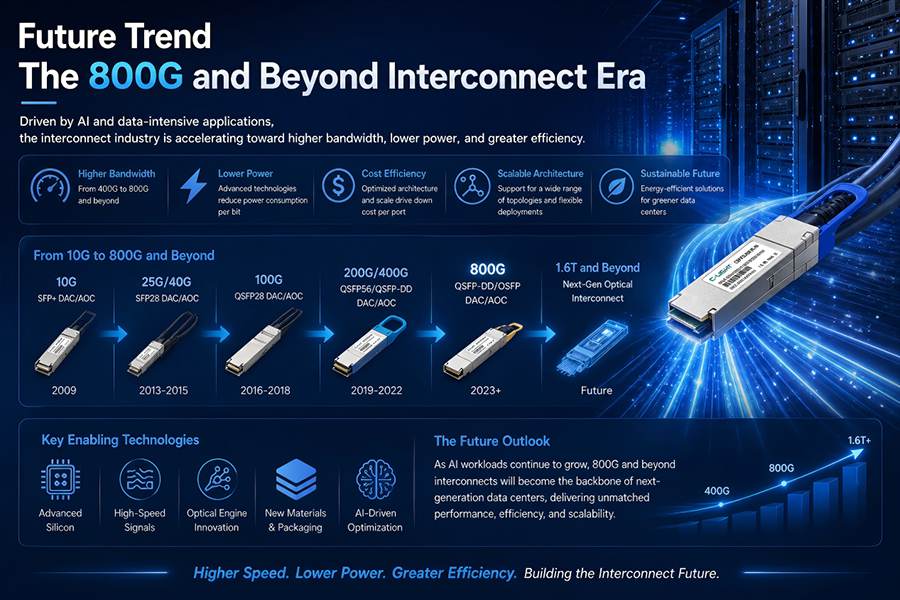

4. Future Trends: The Era of 800G and Beyond

As AI model sizes continue to grow, interconnect technologies are rapidly evolving.

Key trends include:

Accelerated adoption of 800G DAC and 800G AOC

Silicon Photonics enabling higher integration density

The gradual emergence of CPO (Co-Packaged Optics)

However, one thing is clear:

DAC and AOC will continue to coexist and evolve together for the foreseeable future.

They remain the most practical, scalable, and cost-effective interconnect solutions for AI data center infrastructure.

In the AI era, networking performance is no longer secondary to computing power; it is a core part of the computing system itself.

DAC and AOC technologies provide the low-latency, high-bandwidth, energy-efficient, and scalable connectivity required by modern GPU clusters and AI infrastructures. From 100G to 800G and beyond, they will continue to play a critical role in enabling next-generation AI data centers.

TEL:+86 158 1857 3751
